Marcus runs a mid-market B2B HR software company. His conversion team is good. They’ve run dozens of A/B tests. They’ve tightened the checkout flow, reduced form fields, rewritten headlines, sharpened CTAs. His conversion rate sits at 2.3%, which is respectable. Traffic is stable. SEO rankings are holding.

And yet, something is quietly wrong. Inbound leads take longer to engage. Demo requests have dipped despite traffic holding. The sales team keeps reporting that prospects arrive with strong, pre-formed views about the product, sometimes accurate, sometimes not, and seem impatient with anything that doesn’t immediately confirm what they already believe.

What Marcus is experiencing isn’t a CRO failure. It’s a context problem. And it’s one that traditional conversion optimisation was never designed to solve.

The rise of AI search has fundamentally changed something upstream of the website visit. When a buyer types a question into ChatGPT, Perplexity, or Google’s AI Overview before clicking through to a product page, they arrive carrying a synthesised worldview: a mental model of how the category works, who the credible players are, what the trade-offs look like, and often a shortlist already forming.

The website visit is no longer the beginning of their journey. In many cases, it is the final checkpoint before a decision is made.

That changes everything about what conversion rate optimisation needs to do.

The Scale of the Shift

Before getting into the mechanics, it’s worth sitting with just how significant this change is.

About 50% of Google searches already include AI summaries, a figure expected to rise above 75% by 2028. Half of consumers now intentionally seek out AI-powered search engines, with a majority calling AI search their top digital source for buying decisions. According to McKinsey’s October 2025 consumer research, 44% of AI-powered search users say it’s their primary and preferred source of insight, outranking traditional search at 31%, retailer websites at 9%, and review sites at 6%.

The adoption trajectory is equally striking. Daily AI search users in the US rose from 14% in February 2025 to 29.2% in August 2025, according to a HigherVisibility report, a near-doubling in six months. Generative AI traffic is growing 165x faster than organic search traffic.

The key implication for conversion practitioners: AI Overviews and zero-click results have pushed us into a world where the search result is now the answer itself. If a brand isn’t mentioned or cited in that instant, it effectively doesn’t exist, at least for that segment of buyers.

The growing share who do click through from an AI-generated response are not browsing; they have already processed comparative information and arrive with a level of intent that traditional analytics can’t fully capture or explain.

The Pre-Formed Visitor

For most of the history of digital marketing, the website was where discovery happened. Visitors arrived with questions and looked to your content for answers. CRO developed around this reality: present the value proposition clearly, reduce friction, build trust, guide visitors through a funnel from awareness to action.

AI search collapses that model in a very specific way. When a prospective customer uses an AI engine before visiting your site, two things happen simultaneously that traditional CRO frameworks don’t account for.

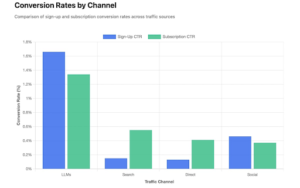

First, they arrive further along the funnel than your analytics suggest. Research on AI-referred traffic has found consistently elevated engagement and conversion intent across multiple data sets.

Microsoft Clarity, analysing traffic across 1,200 publisher and news sites, found that AI-driven referrals grew 155% over eight months and converted at up to three times the rate of traditional channels such as search and social.

Visitors from large language models stayed 30% longer than Google visitors, indicating higher engagement and purchase intent.

Ahrefs’ own data found AI search platform visitors generated 12.1% of signups despite accounting for only 0.5% of overall traffic.

The mechanism behind this is not mysterious. AI-referred visitors convert at dramatically higher rates because they arrive pre-educated.

By the time someone clicks through a ChatGPT citation, they are not browsing; they are buying.

Second, and less discussed, they arrive with specific pre-formed beliefs that your page may directly contradict.

Consider three visitors landing on the same B2B software product page on the same afternoon:

- Visitor A arrived via traditional Google search. They’re in early discovery. They want to understand the category and assess credibility. They need education before they can convert.

- Visitor B asked ChatGPT to compare the top three solutions in this category. The AI named your product favourably and summarised its key differentiators. This visitor arrives pre-validated. They’re looking for confirmation. They’re close to converting, but will bounce immediately if the page contradicts what the AI told them.

- Visitor C asked Perplexity a question and your product appeared in an AI summary that also highlighted a competitor more prominently. They arrive with a latent preference for the competitor. What might convert this visitor is directly addressing the comparison, not ignoring it.

All three look identical in your analytics. All three are recorded as organic search. All three landed on the same page. But they need completely different experiences to convert.

Traditional CRO, optimising a single page variant for an aggregate population, is structurally incapable of serving this reality.

The Cognitive Science Behind the Context Gap

There’s a well-established body of research explaining why expectation mismatch creates such a damaging conversion failure. Cognitive dissonance theory, first proposed by Leon Festinger in 1957, holds that people experience psychological discomfort when their beliefs and observations conflict with each other.

In technology adoption contexts specifically, the negative disconfirmation of initial beliefs about a product’s characteristics and performance is expected to result in dissatisfaction and discontinuous use intention.

This is the mechanism behind what we call the context gap: the space between the mental model the visitor arrived with and what the page actually delivers. The larger that gap, the higher the abandonment rate, regardless of how well-optimised the page is by conventional CRO metrics.

The anchoring effect compounds this further. Anchoring bias occurs when people base their perspective too heavily on the first pieces of information they receive when making decisions, which can cause them to overestimate or underestimate the value of a product due to their initial impression.

When an AI engine creates the first impression of your product, before the visitor has ever seen your homepage, that impression becomes the anchor against which everything on your page is evaluated. If the anchor and the page are misaligned, conversion suffers in ways that no amount of button-colour testing will fix.

The GEO-CRO Loop: One Problem, Not Two

Here is the connection that most marketing teams are missing: your ability to convert AI-mediated visitors is directly shaped by how AI engines characterise your product. And how AI engines characterise your product is shaped by the quality and structure of your own content. This creates a loop. GEO and CRO are no longer separate disciplines. They are two phases of the same cycle.

The landmark research establishing this came from Princeton University, Georgia Tech, the Allen Institute for AI, and IIT Delhi. Their paper, GEO: Generative Engine Optimization, found that adding relevant statistics, quotations, and citations can boost content visibility in generative engines by up to 40%.

The nine-method framework they tested, from authoritative tone and cite-source optimisation to statistics addition, demonstrated that including citations, quotations from relevant sources, and statistics significantly boosts source visibility in generative engine responses. Critically, this optimisation is not neutral: it shapes what the AI says about you, not just whether you appear.

When you publish high-authority, well-structured content that AI engines cite, you influence the narrative those engines build about your product. When that narrative accurately reflects your real differentiators and ideal use cases, the visitors AI sends you arrive with matched expectations.

The reverse is equally true and more common. If your content is thin, generic, or organised in ways that AI engines can’t parse effectively, the AI builds an inaccurate or incomplete picture of your product. A brand’s own sites only comprise 5 to 10% of the sources that AI-powered search references; AI pulls from a broad and diverse array of sources including affiliates and user-generated content.

Domains with millions of brand mentions on platforms like Quora and Reddit have roughly 4x higher chances of being cited by ChatGPT than those with minimal activity. Domains with profiles on platforms like Trustpilot, G2, Capterra, and Yelp have 3x higher chances of being chosen as a source. This is not just a traffic problem. It is a context-shaping problem.

Only 16% of brands today systematically track AI search performance. For the other 84%, the AI is building a narrative about their product in the dark, and that narrative is actively shaping conversion outcomes they’re attributing to entirely the wrong causes.

Why Traffic Volume Is Now the Wrong Variable to Optimise

For most of the past decade, the dominant CRO lever has been traffic quality and volume: getting more of the right people to the page, then optimising the page to convert them. This logic is being disrupted in two simultaneous directions.

On volume: AI Overviews now reduce clicks by 58%, and organic CTR for queries where an AI Overview is present dropped 61% year-over-year from June 2024 to September 2025. The traffic that used to flow to your page from informational and research queries is increasingly staying inside the AI interface. More searches are resolving without a visit.

On quality: The clicks that do happen are increasingly high-intent and context-loaded. Analysis of nearly two million LLM-driven sessions across twelve months found AI traffic concentrated on decision pages: industry pages, tools, and pricing pages showed 4-9x higher AI presence than the 0.13% site average. These are not exploratory visitors. They are buyers at or near the decision point, arriving having already done significant research inside the AI.

Microsoft Advertising reports that Copilot-powered journeys are 33% shorter and 76% more likely to lead to lower-funnel conversions. Shorter journeys with higher conversion probability mean the on-site experience has to do much more in much less time, and it has to do it for a visitor who already has strong prior beliefs.

A Framework for Context-First CRO

Addressing the context gap requires working upstream of the page. Before optimising any element of your site, context-first CRO asks: what has the visitor already been told? What expectations are they carrying? And how do we build an experience that meets them where they are?

This framework operates across three interconnected pillars.

Pillar 1: AI Narrative Auditing

Before you can align your site experience to visitor expectations, you need to understand what those expectations are. This requires systematically auditing how AI engines characterise your product, your category, and your competitors.

Practical AI narrative auditing means querying ChatGPT, Perplexity, Google’s AI Overview, and Gemini with the questions your buyers are most likely to ask, both category-level questions (“What’s the best [category] for [use case]?”) and direct comparison questions (“How does [your product] compare to [competitor]?”). Document what comes back: the language used, the features highlighted, the use cases associated with your brand, the caveats raised, and critically, whether you appear at all.

Then compare that picture to what your homepage and key landing pages communicate. The gaps between the two are your highest-priority CRO opportunities, not because the page copy is bad, but because the page is answering a different question than the one the visitor arrived with.
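One way to make this comparison concrete is to tally how often each recurring phrase shows up in the documented AI responses and check whether the page uses the same language. The sketch below is a minimal illustration rather than a prescribed tool: the function name `phrase_gap`, the candidate phrases, and the sample data are all hypothetical, and it assumes the AI responses have already been saved as plain text.

```python
from collections import Counter


def phrase_gap(ai_responses, candidate_phrases, page_copy):
    """Return (phrase, ai_mentions, on_page) rows, most-mentioned phrase first.

    ai_responses: saved AI answers as plain strings (assumed already collected).
    candidate_phrases: phrases you suspect recur in those answers (hypothetical).
    page_copy: the text of the landing page being compared.
    """
    page = page_copy.lower()
    counts = Counter()
    for response in ai_responses:
        text = response.lower()
        for phrase in candidate_phrases:
            if phrase.lower() in text:
                counts[phrase] += 1  # count responses mentioning the phrase
    rows = [(p, counts[p], p.lower() in page) for p in candidate_phrases]
    return sorted(rows, key=lambda row: -row[1])


# Hypothetical audit data for an imaginary product.
responses = [
    "Acme HR is built for security-conscious enterprises and offers fast onboarding.",
    "Acme HR suits security-conscious enterprises.",
    "Known for fast onboarding.",
]
phrases = ["security-conscious enterprises", "fast onboarding", "payroll"]
page = "The all-in-one HR platform with fast onboarding."

for phrase, mentions, on_page in phrase_gap(responses, phrases, page):
    if mentions and not on_page:
        print(f"Context gap: AI says '{phrase}' ({mentions}x), page does not")
```

Exact substring matching is a deliberately crude choice here; it will miss paraphrases, so a manual read of the responses remains the real audit.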

Domain-specific optimisation matters here: different GEO methods perform better in certain contexts. Authoritative language improves historical content; citation optimisation benefits factual queries; statistics enhance law and government topics. Understanding which content signals shape your specific AI narrative tells you exactly where to invest.

Pillar 2: Entry-Point Personalisation Based on Pre-Visit Context

Once you understand the primary AI narratives driving your inbound traffic, you can build experiences that match those narratives. This goes beyond standard UTM-based landing page personalisation.

Referral data, query strings, and early behavioural signals combine to reveal likely pre-visit context. A visitor arriving from a search term containing “alternative” or “vs [competitor]” has almost certainly already seen AI content framing that comparison. The right experience for them is not your generic homepage; it’s a page that directly enters the conversation already in progress.
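As a rough illustration of how these signals might be combined, the sketch below classifies a visit from its referrer, landing URL, and search term. Everything in it is an assumption for illustration: the function name `infer_context`, the list of AI referrer hosts, the reliance on a `utm_source` parameter, and the availability of a search term at all (organic search terms are often not exposed per visit, so this may only apply to paid or on-site search data).

```python
from urllib.parse import urlparse, parse_qs

# Illustrative, incomplete list of AI referrer hosts (an assumption, not a standard).
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "chat.openai.com",
    "perplexity.ai",
    "www.perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}

# Terms suggesting the visitor has already seen a comparison framing.
COMPARISON_TERMS = ("alternative", " vs ", "versus", "compare")


def infer_context(referrer, landing_url, search_term=""):
    """Return a coarse pre-visit context label: comparison, ai_referred, or unknown."""
    ref_host = urlparse(referrer).hostname or ""
    params = parse_qs(urlparse(landing_url).query)
    utm_source = params.get("utm_source", [""])[0].lower()

    # Pad with spaces so " vs " also matches at the start or end of the term.
    term = f" {search_term.lower()} "
    if any(marker in term for marker in COMPARISON_TERMS):
        return "comparison"
    if ref_host in AI_REFERRER_HOSTS or utm_source in AI_REFERRER_HOSTS:
        return "ai_referred"
    return "unknown"


print(infer_context("https://www.google.com/", "https://example.com/", "acme vs rival"))
print(infer_context("https://chatgpt.com/", "https://example.com/pricing"))
```

The label would then select which entry experience to serve; visits that cannot be classified fall back to the default page.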

In SaaS, AI discovery depends on clearly understanding which pages drive consideration and brand resonance, and tightly connecting that journey to transparent, comparable pricing signals. In e-commerce, structured and comparable product data across product detail pages powers AI-led comparison and shortens decision cycles. The specific implementation varies by sector, but the principle is consistent: use what you can infer about the visitor’s AI-mediated pre-visit journey to shape the entry experience.

Pillar 3: Expectation Confirmation Architecture

Traditional page design focuses on guiding context-free visitors toward a conversion action. Expectation confirmation architecture starts from a different question: within the first ten seconds on this page, can a visitor who arrived with context X confirm they are in the right place?

This reframes every element of page design. The hero headline is no longer primarily about communicating your value proposition from scratch. It’s about mirroring the frame the AI established. If AI consistently describes your product as “built for security-conscious enterprises,” your hero headline should reflect that language immediately. The visitor who arrives carrying that expectation should feel confirmed within seconds, not after scrolling through generic benefit statements.

Social proof placement follows the same logic. Visitors believe what they believe, and for the best results you need to cater to their preconceptions through familiarity and consistency rather than trying to alter those ideas and beliefs. An enterprise visitor primed toward your product wants enterprise logos and compliance certifications first. A mid-market visitor who arrived having read an AI summary about your ease-of-implementation wants onboarding timelines and time-to-value case studies. Getting this ordering wrong doesn’t just fail to convert; it creates doubt about whether the AI characterised you accurately, which creates doubt about you.

The Tests That Move the Needle in an AI-Search World

The framework above requires strategic investment. But several high-ROI tests can be deployed quickly and will consistently outperform standard CRO experiments in an AI-mediated traffic environment.

Test 1: The AI Mirror Headline Test. Audit the language AI engines use most consistently when describing your product across ChatGPT, Perplexity, and Google’s AI Overview. Extract three to five recurring phrases. Then test headline variants on primary landing pages that echo this language against your current headlines.

Test 2: Comparison-Aware Landing Pages. Identify your top three competitor comparison search terms and build landing page variants that acknowledge the comparison context directly rather than ignoring it. These variants name the comparison, acknowledge legitimate differences, and make a clear case for why your product is the right choice for a specific buyer type.

Test 3: Context-Triggered Trust Signal Sequencing. Different AI narratives prime visitors to look for different trust signals. A visitor primed toward “enterprise-grade” wants security certifications and compliance badges. A visitor primed toward “fastest implementation” wants onboarding metrics and time-to-value case studies. Test dynamic trust signal sequencing that reorders and prioritises social proof based on inferred visitor context: source, query parameters, and early behavioural signals. The control condition is your current fixed sequence.

Test 4: Friction Calibration by AI Intent Score. Some 95% of B2B buyers plan to use generative AI in at least one area of a future purchase, but those buyers are not uniform in their readiness to convert. Visitors arriving from high-specificity AI-mediated searches (long-tail, comparison-oriented, product-specific) have processed far more information before arriving and should be shown shorter, faster paths to conversion: fewer fields, a more direct CTA, less educational preamble. Visitors arriving from broad category searches should receive the fuller educational experience.
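The reordering logic in Test 3 can be sketched in a few lines. This is a hypothetical illustration only: the signal names, the context labels, and the function `sequence_trust_signals` are invented for the example, and a real test would run through your experimentation platform with the fixed sequence as the control.

```python
# The current fixed order of trust signals on the page (the control condition).
DEFAULT_SEQUENCE = [
    "customer_logos",
    "testimonials",
    "security_certifications",
    "onboarding_metrics",
    "case_studies",
]

# Hypothetical mapping from inferred AI narrative to the signals shown first.
PRIORITY_BY_CONTEXT = {
    "enterprise_grade": ["security_certifications", "customer_logos"],
    "fastest_implementation": ["onboarding_metrics", "case_studies"],
}


def sequence_trust_signals(context, default=DEFAULT_SEQUENCE):
    """Move the signals matching the inferred context to the front.

    Unknown contexts get the default order, so nothing is ever dropped.
    """
    priority = [s for s in PRIORITY_BY_CONTEXT.get(context, []) if s in default]
    rest = [s for s in default if s not in priority]
    return priority + rest


print(sequence_trust_signals("enterprise_grade"))
print(sequence_trust_signals("no_signal"))  # falls back to the control order
```

Keeping every signal on the page and changing only the order means the variant isolates sequencing as the tested variable.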

The Measurement Problem – and How to Work Around It

Context-first CRO introduces a genuine measurement challenge. When a visitor consults AI, forms an opinion, and then arrives at your site, the conversion that results is partly attributable to the AI interaction, and your analytics will credit none of it. You’ll see an organic visit that converted. You won’t see the AI conversation that shaped it.

As more of the journey happens upstream inside AI experiences, understanding those early signals has become essential. Brands need visibility into where content is being surfaced, how users interact with it, and how those interactions translate into on-site behaviour. Several practical approaches address this.

Segment conversion analysis by query characteristics. Visitors arriving from longer, more specific queries are disproportionately likely to have used AI search as part of their journey. Tracking conversion rates for long-tail, comparison-oriented query segments separately from short-tail traditional searches reveals the performance gap that AI context creates, and allows you to measure the impact of context-closing interventions.
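A minimal sketch of this segmentation follows, assuming you can export sessions as pairs of search query and conversion outcome. The names `segment_for` and `conversion_by_segment`, the four-word threshold for “long-tail”, and the sample sessions are all assumptions chosen for illustration.

```python
from collections import defaultdict


def segment_for(query, long_tail_min_words=4):
    """Bucket a query as comparison, long_tail, or short_tail (threshold is an assumption)."""
    words = query.lower().split()
    is_comparison = (
        "vs" in words
        or "versus" in words
        or any(word.startswith("alternative") for word in words)
    )
    if is_comparison:
        return "comparison"
    return "long_tail" if len(words) >= long_tail_min_words else "short_tail"


def conversion_by_segment(sessions):
    """sessions: iterable of (query, converted) pairs. Returns {segment: conversion rate}."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [visits, conversions]
    for query, converted in sessions:
        bucket = totals[segment_for(query)]
        bucket[0] += 1
        bucket[1] += int(converted)
    return {segment: conv / visits for segment, (visits, conv) in totals.items()}


# Hypothetical export; real data would come from your analytics tool.
sessions = [
    ("hr software", False),
    ("hr software", False),
    ("acme vs rival hr", True),
    ("best hr software for remote teams", True),
    ("best hr software for 50 employees", False),
]
print(conversion_by_segment(sessions))
```

Comparing these rates before and after a context-closing intervention gives a per-segment view that an aggregate conversion rate would hide.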

Implement qualitative research directly into high-value conversion flows. A single survey question, “How did you first research solutions in this category?”, asked immediately after form completion generates signal that quantitative analytics cannot. Over time, the proportion citing AI tools gives you a direct measure of AI-mediated traffic share, which you can correlate with downstream metrics like close rate and time to close.

Treat AI citation audits as a leading indicator. Track how frequently your product is mentioned in AI responses to category queries, how it’s characterised, and whether that characterisation is improving over time. In an AI-first search environment, share of AI citation is a proxy for future inbound conversion opportunity in the same way search ranking position was a proxy for traffic opportunity in the traditional SEO era. When your brand is cited in an AI Overview, organic CTR is 35% higher, but the downstream effects on conversion quality compound further than raw CTR implies.
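If audit results are logged consistently, share of AI citation can be computed per period with very little code. The sketch below assumes a hypothetical log format of (period, brands mentioned in one AI response) and a hypothetical function name `citation_share`; it measures only whether the brand appears, not how it is characterised.

```python
from collections import defaultdict


def citation_share(audit_rows, brand):
    """Return {period: share of audited AI responses that mention `brand`}.

    audit_rows: iterable of (period, brands_mentioned) pairs, one per AI response.
    """
    asked = defaultdict(int)
    cited = defaultdict(int)
    target = brand.lower()
    for period, brands_mentioned in audit_rows:
        asked[period] += 1
        if target in {name.lower() for name in brands_mentioned}:
            cited[period] += 1
    return {period: cited[period] / asked[period] for period in sorted(asked)}


# Hypothetical audit log for an imaginary brand.
audit_log = [
    ("2025-01", ["Acme", "Rival"]),
    ("2025-01", ["Rival"]),
    ("2025-02", ["Acme"]),
    ("2025-02", ["Acme", "Rival"]),
]
print(citation_share(audit_log, "Acme"))
```

Running the same fixed set of category queries each period keeps the shares comparable over time.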

How Concinnity Implements Context-First CRO

At Concinnity, we’ve built our CRO practice around the intersection of AI search and conversion optimisation, because we believe addressing one without the other is increasingly the definition of leaving revenue on the table.

Our starting point is always the AI narrative audit: a systematic analysis of how your product, category, and competitors are characterised across every major AI search engine, mapped against your current on-site experience to identify context gaps by segment and priority.

This tells us not just where your conversion funnel leaks, but why: specifically, where the story the AI is telling about you and the story your page tells are in conflict.

We then design and run the tests with the strongest track record in an AI-mediated traffic environment: AI mirror headline tests, comparison-aware landing pages, context-triggered trust signal sequencing, and entry-point personalisation informed by pre-visit context inference.

Our teams combine CRO expertise with GEO strategy so that every conversion optimisation also feeds back into better AI characterisation, creating the compounding loop that separates sustainable revenue growth from one-time conversion lifts.

We establish clear baseline metrics before any work begins, set realistic improvement targets based on your industry and traffic patterns, and track progress weekly to ensure AI-mediated context alignment delivers measurable outcomes, not just activity.

Get in touch for a free context-gap audit and discover how much conversion potential is currently being lost in the space between what AI tells your visitors and what your pages deliver.

FAQ

- Does context-first CRO replace traditional CRO?

No. Traditional CRO principles, such as reducing friction, building trust, and improving clarity, remain valid and important. Context-first CRO adds an upstream layer: before optimising the page itself, it asks what expectations the visitor arrived with.

- How do I find out what AI is actually saying about my product?

Manual querying is the most practical starting point. Use ChatGPT, Perplexity, Google’s AI Overview, and Gemini with the questions your buyers most commonly ask: category questions, use-case questions, and direct competitor comparisons.

- How long does it take to influence how AI characterises my product?

For real-time search-integrated tools like Perplexity, well-structured new content can shift characterisation within weeks of publication. For models with longer training cycles, the lag may be three to six months. Initial GEO visibility changes can appear within two to four weeks, faster than traditional SEO’s three-to-six-month timeline, but building sustained authority takes longer as external mentions compound over time.

- What traffic volumes do I need before this matters?

The AI narrative audit is valuable regardless of traffic volume because it shapes content strategy and GEO direction. For specific A/B tests, standard requirements apply: enough monthly conversions to reach statistical significance within a reasonable test duration. As a practical threshold, businesses with at least 5,000 monthly visitors and 50+ monthly conversions can run meaningful context-first tests. Below that level, qualitative research, session recordings, and user interviews become the primary diagnostic tools.

- Can AI characterisation hurt conversion rates even when my SEO rankings are strong?

Yes. This is one of the most important points for marketing leaders to understand. Strong SEO rankings provide traffic but don’t protect against context gaps created by AI characterisation. It is entirely possible to rank on page one for a competitive keyword while AI engines simultaneously mischaracterise your product in ways that prime visitors with misaligned expectations.

- Does this work for e-commerce as well as B2B?

Yes, though the mechanics differ. In B2B, AI-primed visitors arrive with category framing and vendor comparisons that shape long consideration cycles. For e-commerce, structured and comparable product data across product detail pages powers AI-led comparison and captures users with purchase intent already formed.

References

- Aggarwal, P., Murahari, V., Rajpurohit, T., Kalyan, A., Narasimhan, K., & Deshpande, A. (2024). GEO: Generative engine optimization. Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, pp. 5–16.

- McKinsey & Company. (2025, October). New front door to the internet: Winning in the age of AI search.

- Previsible. (2025). 2025 state of AI discovery report: What 1.96 million LLM sessions tell us about the future of search.

- Seer Interactive. (2025, June). LLM traffic conversion rate data. Cited via Position Digital: AI SEO statistics.

- Adobe Digital Insights. (2025). The explosive rise of generative AI referral traffic.

- Microsoft Clarity & Microsoft Advertising. (2025, November). How AI search is changing the way conversions are measured.

- Omnius. (2025, August). GEO industry report 2025: Trends in AI & LLM optimization.

- eMarketer. (2026, January). How experts say GEO, AI will change discovery in 2026.

- Ahrefs. (2025, June). AI search vs Google: Real 2025 traffic data. Cited via RankScience: AI search vs Google traffic data.

- Semrush. (2025, July). LLM visitor conversion rate analysis. Cited via GrowthMarshal: AI search traffic is 4x more valuable than organic.

- Festinger, L. (1962). A theory of cognitive dissonance. Stanford University Press. Referenced in: Tsourela, M., & Roumeliotis, M. (2020). Cognitive dissonance in technology adoption: A study of smart home users. Computers in Human Behavior.

- Dataslayer. (2025). Generative engine optimization: The AI search guide.

- SEO.ai. (2024). Generative engine optimization (GEO) and how to optimize for AI search results [Princeton study].

- Search Engine Land. (2025, October). ChatGPT, LLM referrals convert worse than Google Search: Study.