Sarah is the head of e-commerce at a mid-sized retail brand. Last quarter, her team deployed an AI personalization engine that cut customer acquisition costs by 28% and pushed email click-through rates to their highest point in three years. The board was delighted. Then, one morning, she opened her inbox to find a message from a long-time customer: ‘I don’t know how you knew I was looking for a divorce lawyer, but the ad you sent me last night was terrifying. I’m unsubscribing from everything.’

Sarah’s story is not unusual. It sits at the fault line of one of the defining tensions in modern marketing: the more precisely AI can personalize an experience, the more it reveals about what it knows, and the more unsettling that knowledge can feel to the person on the receiving end.

This tension is not a technical glitch or a PR problem to manage around. It is a fundamental marketing dilemma that will define competitive advantage, regulatory risk, and brand equity for the next decade. And, as we have explored in our earlier work on how AI is reshaping conversion rate optimization and search visibility, the brands that understand this dynamic earliest will be the ones best positioned to act on it.

Our piece on “AI for CRO: How Predictive Models and Simple Tests Unlock Hidden Revenue” examined how AI micro-segmentation is transforming conversion funnels. This article examines the ethical and commercial consequences of that same capability. Our “GEO vs SEO: The New Rules of Being Found Online in 2026” piece is also directly relevant: consumers increasingly use AI search precisely to avoid the tracking infrastructure of individual websites.

The Promise: Why Hyper-Personalization Has Become Table Stakes

The commercial case for AI personalization is, at this point, overwhelming. McKinsey’s research consistently shows that companies with superior personalization capabilities generate between 5 and 15 percent higher revenue than industry averages, and that leading firms derive 40 percent more revenue from their personalization activities than slower-moving peers. AI-powered recommendation engines alone can drive up to 26 percent revenue uplift in sessions where customers actively engage with them.

The mechanism behind this is not mysterious. AI personalization works because it does what human intuition cannot: it processes thousands of behavioral signals simultaneously (scroll depth, session time, return frequency, price sensitivity patterns, device type, time of day) and identifies micro-segments invisible to even the most experienced marketing team. As our earlier research on AI for CRO demonstrated, this granularity enables brands to serve different trust signals, calls to action, and form structures to different visitor segments in real time, compounding into conversion rate improvements that traditional A/B testing could never achieve at comparable speed.

33% of consumers say they trust companies with AI-collected data, up from 29% in 2024.

76% of consumers would switch brands for verified AI data transparency, even at higher cost.

82% of US consumers view AI data loss-of-control as a serious personal threat.

The adoption numbers reflect this commercial pull. As of 2025, 92 percent of businesses use AI to drive some form of personalization. The hyper-personalization market is growing at 17.8 percent annually and is projected to reach $21.79 billion by 2026. This is no longer an emerging capability; it is fast becoming an assumed baseline of competitive digital marketing.

And yet, almost in lockstep with this adoption, consumer concern has not diminished. It has deepened.

The Problem: The Personalization-Privacy Paradox

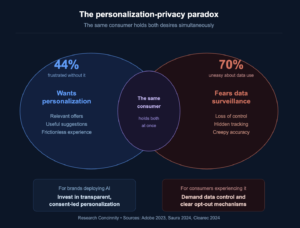

Researchers have a name for the central tension that Sarah experienced: the personalization-privacy paradox. It describes a consistent pattern in consumer behavior: people simultaneously want the convenience and relevance of personalized experiences and feel deeply uncomfortable about how brands acquire the information that makes that personalization possible.

“It feels creepy when the ads are too accurate; it’s like they know me better than I know myself.” (Research participant, Advances in Consumer Research, 2025)

An Adobe study found that 44 percent of consumers feel frustrated when brands fail to deliver personalized experiences. But in the same research, 70 percent reported feeling uneasy about how their data is collected and used. These are not contradictory findings. They describe the same person, holding both preferences at once, navigating an experience they never fully understood or meaningfully consented to.

A 2025 survey by Relyance AI of over 1,000 US consumers found that 82 percent view AI data loss-of-control as a serious personal threat, with 43 percent describing it as ‘very serious.’ Only 18 percent did not see it as a significant problem. That is a baseline of near-universal distrust that marketers must reckon with before deploying any personalization strategy.

What is changing is not whether consumers care about privacy (they always have) but their awareness of what is actually happening. As AI systems become more capable, their outputs become more visibly precise. The ad that follows you across platforms, the email that references a life event you never directly shared, the product recommendation that feels more like surveillance than service: these moments surface the data infrastructure that was always operating invisibly. And once surfaced, they are difficult to unsee.

The Ethical Fault Lines: What AI Personalization Actually Puts at Risk

The ethical concerns around AI personalization are often discussed as a single problem, privacy, but they are better understood as four distinct fault lines, each carrying different commercial and reputational risks.

- Data collection without genuine informed consent

Most personalization systems operate on data that consumers technically consented to share but did not meaningfully understand they were sharing. Cookie banners and privacy policies written in dense legalese satisfy regulatory minimums without actually informing anyone. Karami, Shemshaki, and Ghazanfar (2025), in a comprehensive review published in Data Intelligence, identify this as the primary ethical concern in AI-powered digital marketing: the gap between formal consent and genuine comprehension creates systems that are legally defensible but ethically hollow.

- Algorithmic bias amplified at scale

AI systems learn from historical data. When that data reflects existing demographic disparities (who was shown which products, who was extended which credit terms, who was targeted with which offers), the model internalizes those patterns and reproduces them. Research consistently shows that this can result in discriminatory targeting along lines of gender, race, age, and income. At the scale AI personalization operates, these biases do not remain edge cases. They become structural.
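One way to make this kind of disparity visible is to compare how often each audience group is actually shown a given offer. The following is a minimal sketch rather than a complete fairness audit: the group labels, the record shape, and the function names are all hypothetical, and what counts as an acceptable ratio is a judgment call for each team.

```python
from collections import defaultdict

def offer_rate_by_group(impressions):
    """Return the share of visitors in each group who were shown the offer.

    `impressions` is an iterable of (group, was_shown) pairs. Both the group
    labels and this record shape are hypothetical.
    """
    shown = defaultdict(int)
    total = defaultdict(int)
    for group, was_shown in impressions:
        total[group] += 1
        if was_shown:
            shown[group] += 1
    return {group: shown[group] / total[group] for group in total}

def disparity_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal exposure."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Illustrative data only: group A sees the offer three times out of four,
# group B once out of four.
sample = [("A", True), ("A", True), ("A", False), ("A", True),
          ("B", True), ("B", False), ("B", False), ("B", False)]
rates = offer_rate_by_group(sample)
ratio = disparity_ratio(rates)
```

A ratio well below 1.0 does not prove discrimination on its own, but it is a prompt to ask why the model is treating the groups differently.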

- Manipulation masquerading as relevance

Predictive models identify not only what consumers want but when they are most psychologically susceptible to an offer. A consumer researching a health condition, navigating a relationship change, or facing a financial difficulty is statistically detectable from behavioral signals. Targeting them with precision offers during moments of vulnerability is not personalization in any meaningful sense; it is exploitation. Research from Hardcastle, Vorster, and Brown (2025) in the Journal of Advertising identifies ‘perceived dataveillance’ as a significant factor in how consumers experience AI-driven advertising, with many respondents describing the sensation as surveillance rather than service.

- Opacity and the right to explanation

When a personalization algorithm determines that a specific visitor should see a shorter form, a different price, or a competing offer suppressed, neither the marketer nor the consumer typically understands why. Research based on 650 Greek consumers found that trust and ethical perceptions are the most significant predictors of AI personalization acceptance, and that opacity in how AI systems operate is the primary driver of distrust (Serdaris, Antoniadis, & Spinthiropoulos, 2025). The EU AI Act directly addresses this with explainability requirements for high-risk AI systems, a category likely to expand to cover more marketing applications over time.

The Regulatory Landscape: Privacy Law Is Catching Up Faster Than Most Brands Expect

Regulation has historically lagged digital marketing practice by a decade or more. That gap is closing. The EU’s General Data Protection Regulation set a global standard in 2018, and enforcement has since scaled significantly. Facebook’s $5 billion settlement with the US Federal Trade Commission in 2019 and subsequent European enforcement actions signal that regulators now have both the appetite and the frameworks to act.

Gartner projected that by 2025, 60 percent of large organizations would use AI to automate GDPR compliance, up from 20 percent in 2023. India’s Digital Personal Data Protection Act, enacted in 2023, adds another significant jurisdiction to the compliance landscape. California’s CCPA continues to tighten data rights for American consumers.

The direction of travel is consistent across geographies: greater consumer rights, higher consent requirements, more explicit transparency obligations, and meaningful penalties for violations. PwC found that 79 percent of CEOs believe ethical AI will be crucial to maintaining customer trust over the next five years, and that belief is increasingly backed by legal obligation rather than aspiration alone.

“Non-compliance with laws like GDPR or CCPA can cost companies millions, but the reputational damage is even harder to repair.” (Industry practitioner, California Management Review, 2025)

What the Evidence Actually Shows About Trust and Commercial Performance

A common assumption in marketing is that privacy and personalization are fundamentally in tension, that more of one requires less of the other. The evidence suggests this framing is wrong, and that it leads brands into poor strategic decisions.

The Relyance AI survey found that 76 percent of consumers would switch to a brand with verified AI data practices, even at higher cost. Fifty percent would choose transparency over the lowest available price. These are material commercial differences: brands that build demonstrable trust around data practices can capture market share from those that do not, and at better margins.

McKinsey found that businesses employing anonymized data (stripping out personally identifiable information while retaining behavioral pattern signals) saw a 30 percent improvement in personalization accuracy while maintaining compliance. The implication is significant: the most sensitive personal data is often not the most predictively useful data. Behavioral signals such as session sequence, scroll patterns, return frequency, and content engagement are frequently better conversion predictors than the personal identifiers that create the greatest privacy risk.
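To make the idea of stripping identifiers while keeping behavioral signals concrete, here is a minimal sketch. The field names, the `strip_identifiers` helper, and the key value are hypothetical. Note also that replacing a user ID with a keyed hash is pseudonymization rather than full anonymization: anyone holding the key can re-link the records, so the key needs its own protection.

```python
import hashlib
import hmac

# Hypothetical field names; a real event schema will differ.
PII_FIELDS = {"email", "name", "phone", "ip_address"}

def strip_identifiers(event, secret_key):
    """Return a copy of `event` without direct identifiers.

    The user id is replaced with a keyed hash so that events from the same
    visitor can still be grouped without storing the id itself.
    """
    cleaned = {k: v for k, v in event.items()
               if k not in PII_FIELDS and k != "user_id"}
    digest = hmac.new(secret_key, str(event["user_id"]).encode(), hashlib.sha256)
    cleaned["visitor_key"] = digest.hexdigest()[:16]
    return cleaned

event = {"user_id": 4821, "email": "a@example.com", "ip_address": "203.0.113.7",
         "page": "/pricing", "scroll_depth": 0.8, "seconds_on_page": 42}
safe_event = strip_identifiers(event, secret_key=b"example-key-store-securely")
```

The behavioral fields (`page`, `scroll_depth`, `seconds_on_page`) pass through untouched, which is the point: the model keeps the signals it needs while the record no longer carries an email address or IP.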

Serdaris, Antoniadis, and Spinthiropoulos (2025) further found that ‘AI literacy’ (consumers’ ability to understand how AI systems are making decisions about them) significantly increases acceptance of personalization. Users who understand that a recommendation engine is surfacing products based on browsing patterns feel less manipulated than users who receive the same recommendation with no explanation. Transparency, it turns out, is itself a form of trust signal.

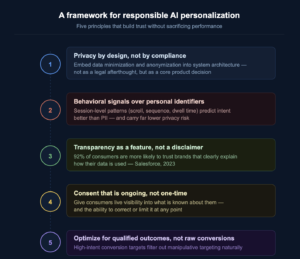

A Framework for Responsible AI Personalization

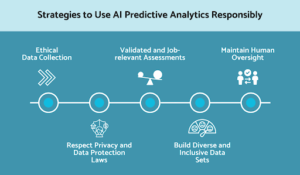

Responsible AI personalization is not about doing less personalization. It is about doing it in ways that build rather than erode the consumer trust that makes personalization commercially sustainable over time. The following principles, drawn from both the academic literature and emerging regulatory guidance, offer a practical framework.

Privacy by design, not by compliance

The weakest form of privacy practice is minimum viable compliance: doing just enough to satisfy current regulatory requirements. The strongest form embeds privacy considerations into the architecture of personalization systems from the beginning: data minimization, anonymization where possible, retention limits enforced by design rather than policy, and consent mechanisms that are meaningful rather than performative. Apple’s App Tracking Transparency framework, which gives users explicit control over cross-app tracking, is the clearest large-scale example of this principle in practice.

Behavioral signals over personal identifiers

The most privacy-preserving personalization systems minimize reliance on persistent personal identifiers and maximize use of in-session behavioral signals. A visitor’s current page sequence, scroll behavior, and engagement patterns tell a predictive model a great deal about their intent without requiring access to browsing history across other platforms or their demographic profile. This approach also tends to produce more accurate predictions for conversion-specific outcomes, because it captures current intent rather than generalizing from historical behavior that may no longer be relevant.
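As an illustration of what in-session signals can look like in practice, the sketch below reduces one visit to a handful of intent features without touching any identifier or cross-site history. The event keys and the choice of features are assumptions made for the example, not a recommended feature set.

```python
def session_features(events):
    """Summarize one session's events into a few intent signals.

    `events` is a list of dicts with hypothetical keys: `page`,
    `scroll_depth` (0.0 to 1.0), and `seconds_on_page`.
    """
    if not events:
        return {"pages_viewed": 0, "avg_scroll_depth": 0.0,
                "total_seconds": 0, "viewed_pricing": False}
    return {
        "pages_viewed": len(events),
        "avg_scroll_depth": sum(e["scroll_depth"] for e in events) / len(events),
        "total_seconds": sum(e["seconds_on_page"] for e in events),
        "viewed_pricing": any(e["page"].startswith("/pricing") for e in events),
    }

# Illustrative session: three page views ending on the pricing page.
session = [
    {"page": "/", "scroll_depth": 0.5, "seconds_on_page": 10},
    {"page": "/features", "scroll_depth": 1.0, "seconds_on_page": 50},
    {"page": "/pricing", "scroll_depth": 0.75, "seconds_on_page": 30},
]
features = session_features(session)
```

Everything in `features` is derived from the current visit alone, so it can be discarded when the session ends.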

Transparency as a feature, not a disclaimer

Salesforce research found that 92 percent of consumers are more likely to trust brands that clearly explain how their data is used. Framing this transparency as a product feature (‘here is how we personalize your experience and here is what you can control’) transforms a compliance obligation into a trust-building signal that differentiates the brand. Privacy disclosures buried in footers and written for lawyers are missing a commercial opportunity.

Consent that is ongoing, not one-time

Most personalization consent architectures are front-loaded: a user agrees to data practices once, during account creation or first visit, and that consent is treated as permanent. Consumer expectations are moving toward ongoing consent: the ability to see what is known about you, to correct it, and to adjust what is used for what purposes. Brands that build this capability proactively will be better positioned than those forced to implement it under enforcement pressure.
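A simple way to picture ongoing consent is as a per-purpose record that can change at any time and keeps its own history. The sketch below assumes hypothetical purpose names and a hypothetical `ConsentRecord` class; a production system would also need persistence, authentication, and propagation to downstream tools.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class ConsentRecord:
    """Per-purpose consent for one visitor; purpose names are hypothetical."""
    purposes: dict = field(default_factory=dict)  # purpose -> bool
    history: list = field(default_factory=list)   # (timestamp, purpose, granted)

    def record(self, purpose, granted):
        """Store the latest choice and keep an audit trail of every change."""
        self.purposes[purpose] = granted
        self.history.append((datetime.now(timezone.utc), purpose, granted))

    def allows(self, purpose):
        # Default to False: no recorded choice means no consent.
        return self.purposes.get(purpose, False)

consent = ConsentRecord()
consent.record("onsite_recommendations", True)
consent.record("email_personalization", True)
consent.record("email_personalization", False)  # the visitor later opts out
```

The personalization engine would call `consent.allows(...)` before using data for a given purpose, so a changed preference takes effect on the next request rather than being locked in at sign-up.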

Optimizing for qualified outcomes, not raw conversions

AI systems optimized purely for conversion rate can identify and target moments of consumer vulnerability in ways that produce short-term results and long-term trust destruction. Systems optimized for qualified, high-intent conversions (the kind we explored in our CRO research) naturally tend toward less manipulative personalization strategies, because they filter out the low-quality interactions that create the perception of surveillance.

The GEO Connection: Privacy Expectations Are Reshaping Discovery Too

The personalization-privacy tension does not live only in the relationship between brands and their existing customers. It is also reshaping how new customers discover brands in the first place, and this connects directly to the shift from traditional SEO to Generative Engine Optimization that we examined in our January 2026 piece.

As we noted in “GEO vs SEO: The New Rules of Being Found Online in 2026,” nearly 60 percent of searches now end without a click. AI systems synthesize and present information without exposing users to the tracking infrastructure of individual websites. This is, among other things, a privacy behavior: users are choosing AI-mediated discovery precisely because it offers a more anonymous path to information. Brands that want to be cited and recommended by AI search engines will need to demonstrate the kind of credible, substantive expertise that builds AI authority. That same expertise is also the foundation of the trust that ethical personalization requires.

The implication is that privacy-respecting brands and GEO-optimized brands are likely to be the same brands. Both strategies require the same underlying disposition: genuine investment in the consumer relationship rather than extraction from it.

What This Means for Your Marketing Strategy Right Now

For marketing leaders sitting with these realities, the practical question is where to start. Here is a sequenced approach, ordered by urgency.

Audit your data collection against genuine necessity. For each data point you currently collect for personalization purposes, ask: does removing this meaningfully reduce personalization quality, or are we collecting it because it is available? Most teams discover they are holding far more personally identifiable data than their personalization models actually require.

Review your consent architecture for comprehension, not just compliance. Have an actual consumer, not a lawyer, attempt to understand your data practices from your current consent flows. If they cannot explain back to you what you will do with their data and what choices they have, your consent architecture is not meeting the spirit of emerging regulation.

Invest in behavioral personalization infrastructure. Session-level behavioral signals (what a visitor does in the current session, not who they are across all sessions) are often more predictively useful for conversion outcomes and carry far lower privacy risk.

Build transparency into customer-facing communications. Create a simple, human-language explanation of how you use data to personalize experiences, and make it findable, not buried in a footer. Frame it as a value exchange, not a legal disclaimer.

Monitor for ‘creepiness’ signals in customer feedback. The moment when personalization crosses from useful to unsettling is detectable in qualitative feedback, support tickets, and unsubscribe reasons. Build a mechanism to catch these signals early, before they compound into reputational damage.
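The monitoring step above can start very simply. The sketch below flags feedback that contains phrases associated with feeling watched, so a person can review it. The phrase list is a hypothetical starting point and should be replaced with language drawn from your own support tickets and unsubscribe reasons; keyword matching will miss plenty and is no substitute for reading the feedback.

```python
import re

# Hypothetical starter phrases; tune these against your own feedback.
CREEPY_PATTERNS = [
    r"\bcreepy\b",
    r"\bhow (did )?you (know|knew)\b",
    r"\bstalk(ing|ed)?\b",
    r"\bspying\b",
    r"\binvasive\b",
    r"\btoo personal\b",
]
_CREEPY_RE = re.compile("|".join(CREEPY_PATTERNS), re.IGNORECASE)

def flag_feedback(messages):
    """Return the messages that match any pattern, for human review."""
    return [m for m in messages if _CREEPY_RE.search(m)]

feedback = [
    "Love the new checkout flow.",
    "How did you know I was looking at this? Unsubscribing.",
    "This email felt really invasive.",
    "Shipping took too long.",
]
flagged = flag_feedback(feedback)
```

Tracking the share of flagged messages per campaign over time gives an early, if rough, indicator of when a personalization tactic has started to feel unsettling.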

The Dilemma Is Real. The Path Forward Is Not a Compromise.

Sarah’s inbox moment, the customer who felt seen in the worst possible way, is not a cautionary tale about personalization. It is a cautionary tale about personalization deployed without a corresponding investment in the trust architecture that makes it sustainable.

The brands that will navigate the next decade of AI personalization most successfully are not those that do the least personalization out of caution, nor those that push personalization to its technical limits without regard for consumer experience. They are the ones that treat trust as a product feature, something designed, measured, and continuously improved alongside the personalization engine itself.

AI can identify a consumer’s intent with remarkable precision. The question is whether it uses that precision to serve them or to exploit them. That is not a technical question. It is a strategic one. And the brands that answer it well will find that responsible personalization is not a constraint on competitive advantage; it is the foundation of it.

Frequently Asked Questions

- Isn’t AI personalization just targeted advertising with a better name?

There is meaningful overlap, but also meaningful distinction. Traditional targeted advertising uses demographic and interest-based segments, broad categories that many people share. AI personalization operates at the level of individual behavioral prediction, using real-time signals to infer intent with far greater precision. The difference matters both for effectiveness and for privacy risk: the more precise the targeting, the more powerful the personalization and the greater the potential for it to feel invasive.

- How does GDPR specifically affect AI personalization in marketing?

GDPR creates several direct obligations for personalization systems operating in or targeting the EU. Consent must be freely given, specific, informed, and unambiguous; pre-ticked boxes do not qualify. Consumers have the right to access, correct, and delete their personal data. Automated decision-making that produces significant effects must be explainable on request. Fines of up to €20 million or 4 percent of global annual turnover, whichever is higher, apply for the most serious violations. Similar frameworks now operate in California, India, and across Southeast Asia.

- Can small and mid-sized businesses implement privacy-respecting AI personalization without enterprise budgets?

Yes. Mid-market businesses are often better positioned than large enterprises because they have less legacy data infrastructure to audit and reform. Session-level behavioral personalization is available through platforms like Mutiny, Intellimize, and Optimizely at price points within reach of mid-market teams. The investment required is less technical than organizational: establishing clear data governance policies, building meaningful consent flows, and committing to behavioral-signal-first personalization architecture.

- What is the ‘creepiness’ threshold and how do we know when we have crossed it?

Research consistently identifies precision as the primary driver of perceived creepiness: personalization that accurately reflects something the consumer did not consciously share (inferred health conditions, relationship status, financial stress) crosses the threshold most reliably. Location-level or time-of-day personalization rarely triggers the same response. The practical test is whether the personalization implies knowledge the consumer would not expect the brand to have. Consumer feedback, exit surveys, and unsubscribe reasons are the most reliable early-warning signals.

- How should AI personalization strategies adapt as cookie deprecation progresses?

Cookie deprecation accelerates the shift toward first-party and zero-party data strategies that the privacy framework described in this article already recommends. First-party data (behavioral signals from your own properties) and zero-party data (information consumers actively share with you in exchange for better experiences) are both more privacy-respecting and more durable than third-party tracking. Brands that have built direct consumer relationships and session-level behavioral personalization will find themselves more competitive in the post-cookie environment, not less.

- Is there a difference between personalization for B2B and B2C from a privacy ethics perspective?

The principles are the same, but the risk profiles differ. B2C personalization involving consumer health data, financial circumstances, or relationship status carries high sensitivity and is subject to the most stringent regulatory frameworks. B2B personalization (using firmographic signals, job title, and company size to serve different content) typically carries lower personal sensitivity risk, though business email addresses and professional behavior data are still personal data under most regulatory frameworks. The more significant ethical issue in B2B personalization tends to be algorithmic bias in lead scoring.

References

Cloarec, J., Meyer-Waarden, L., & Munzel, A. (2024). Transformative privacy calculus: Conceptualizing the personalization-privacy paradox on social media. Psychology & Marketing, 41(7), 1574–1596.

Gartner. (2023). Top strategic technology trends: AI governance and privacy automation. Gartner Inc.

Hardcastle, K., Vorster, L., & Brown, D. M. (2025). Understanding customer responses to AI-driven personalized journeys: Impacts on the customer experience. Journal of Advertising, 54(2), 176–195.

Karami, A., Shemshaki, M., & Ghazanfar, M. A. (2025). Exploring the ethical implications of AI-powered personalization in digital marketing. Data Intelligence, 7, Art. No. 2025r32, 1035–1084.

McKinsey & Company. (2022). The value of getting personalization right — or wrong — is multiplying. McKinsey & Company.

McKinsey & Company. (2023). The state of AI in 2023: Generative AI’s breakout year. McKinsey & Company.

PwC. (2023). Responsible AI: From principles to practice. PricewaterhouseCoopers International.

Salesforce. (2023). State of the connected customer (6th ed.). Salesforce Inc.

Salesforce. (2024). State of marketing report (9th ed.). Salesforce Inc.

Serdaris, P., Antoniadis, I., & Spinthiropoulos, K. (2025). Personalization, trust, and identity in AI-based marketing: An empirical study of consumer acceptance in Greece. Administrative Sciences, 15(11), 440.

Victor-Nyebuchi, M. (2025, June 13). The impact of AI-driven personalization tools on privacy concerns and trust in social media marketing [SSRN Working Paper].

Zuboff, S. (2019). The age of surveillance capitalism: The fight for a human future at the new frontier of power. PublicAffairs.